An Introductory Robot Programming Tutorial

Let’s face it, robots are cool. They’re also going to run the world someday, and hopefully at that time they will take pity on their poor soft fleshy creators (AKA robotics developers) and help us build a space utopia filled with plenty. I’m joking of course, but only sort of.

In my ambition to have some small influence over the matter, I took a course in autonomous robot control theory last year, which culminated in my building a simulator that allowed me to practice control theory on a simple mobile robot.

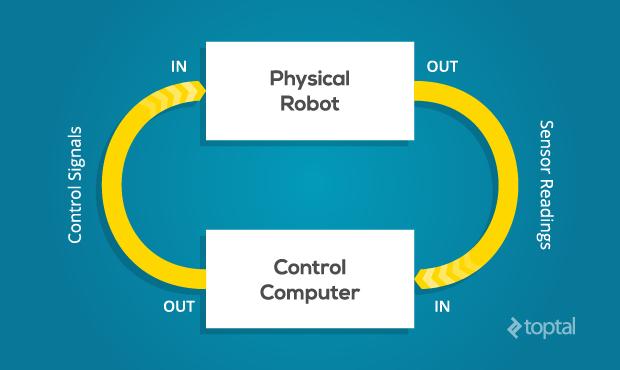

In this article, I’m going to describe the control scheme of my simulated robot, illustrate how it interacts with its environment and achieves its goals, and discuss some of the fundamental challenges of robotics programming that I encountered along the way.

The fundamental challenge of all robotics is this: It is impossible to ever know the true state of the environment. A robot can only guess the state of the real world based on measurements returned by its sensors. It can only attempt to change the state of the real world through the application of its control signals.

Thus, one of the first steps in control design is to come up with an abstraction of the real world, known as a model, with which to interpret our sensor readings and make decisions. As long as the real world behaves according to the assumptions of the model, we can make good guesses and exert control. As soon as the real world deviates from these assumptions, however, we will no longer be able to make good guesses, and control will be lost. Often, control once lost can never be regained. (Unless some benevolent outside force restores it.)

This is one of the key reasons that robotics programming is so difficult. We often see video of the latest research robot in the lab, performing fantastic feats of dexterity, navigation, or teamwork, and we are tempted to ask, “Why isn’t this used in the real world?” Well, next time you see such a video, take a look at how highly controlled the lab environment is. In most cases, these robots are only able to perform these impressive tasks as long as the environmental conditions remain within the narrow confines of their internal models. Thus, a key to the advancement of robotics is the development of more complex, flexible, and robust models, an advancement that is subject to the limits of the available computational resources.

The simulator I built is written in Python and very cleverly dubbed Sobot Rimulator. You can find v1.0.0 on GitHub. It does not have a lot of bells and whistles, but it is built to do one thing very well: provide an accurate simulation of a robot and give an aspiring roboticist an interface for practicing robot control programming. While it is always better to have a real robot to play with, a good robot simulator is much more accessible, and is a great place to start.

The software simulates a real-life research robot called the Khepera III. In theory, the control logic can be loaded into a real Khepera III robot with minimal refactoring, and it will perform the same as the simulated robot. In other words, programming the simulated robot is analogous to programming the real robot. This is critical if the simulator is to be of any use.

In this tutorial, I will describe the robot control architecture that comes with v1.0.0 of Sobot Rimulator and provide snippets from the source (with slight modifications for clarity). However, I encourage you to dive into the source and mess around. Likewise, please feel free to fork the project and improve it.

The control logic of the robot is constrained to these files:

- `models/supervisor.py`
- `models/supervisor_state_machine.py`
- the files in the `models/controllers` directory

Every robot comes with different capabilities and control concerns. Let’s get familiar with our simulated robot.

The first thing to note is that, in this guide, our robot will be an autonomous mobile robot. This means that it will move around in space freely, and that it will do so under its own control. This is in contrast to, say, an RC robot (which is not autonomous) or a factory robot arm (which is not mobile). Our robot must figure out for itself how to achieve its goals and survive in its environment, which proves to be a surprisingly difficult challenge for a novice robotics programmer.

There are many different ways a robot may be equipped to monitor its environment. These can include proximity sensors, light sensors, bumpers, cameras, and so forth. In addition, robots may communicate with external sensors that give them information they cannot directly observe themselves.

Our robot is equipped with 9 infrared proximity sensors arranged in a skirt around its body so that they face in every direction. There are more sensors facing the front of the robot than the back, because it is usually more important for the robot to know what is in front of it than what is behind it.

In addition to the proximity sensors, the robot has a pair of wheel tickers that track how many rotations each wheel has made. One full forward turn of a wheel counts off 2765 ticks. Turns in the opposite direction count backwards.

Some robots move around on legs. Some roll like a ball. Some even slither like a snake.

Our robot is a differential drive robot, meaning that it rolls around on two wheels. When both wheels turn at the same speed, the robot moves in a straight line. When the wheels move at different speeds, the robot turns. Thus, controlling movement of this robot comes down to properly controlling the rate at which each of these two wheels turns.

In Sobot Rimulator, the separation between the robot computer and the (simulated) physical world is embodied by the file robot_supervisor_interface.py, which defines the entire API for interacting with the real world:

- `read_proximity_sensors()` returns an array of 9 values in the sensors’ native format
- `read_wheel_encoders()` returns an array of 2 values indicating total ticks since start
- `set_wheel_drive_rates( v_l, v_r )` takes two values, in radians per second
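To make the shape of this API concrete, here is a minimal sketch of a stand-in robot that exposes the same three calls, together with two helpers for working with it. This is not code from Sobot Rimulator: the class `FakeRobotInterface`, its `step` method, and the helpers `ticks_to_distance` and `uni_to_diff` are hypothetical names, and the wheel radius and wheel base are assumed values chosen only for illustration. The only number taken from the text is the 2765 ticks per wheel revolution.

```python
import math

TICKS_PER_REV = 2765   # from the text: one full forward turn of a wheel
WHEEL_RADIUS = 0.021   # meters; ASSUMED value, for illustration only
WHEEL_BASE = 0.0885    # meters between the wheels; ASSUMED value


class FakeRobotInterface:
    """Hypothetical stand-in exposing the three API calls listed above."""

    def __init__(self):
        self._ticks = [0, 0]       # left, right encoder counts
        self._rates = (0.0, 0.0)   # left, right wheel rates in rad/s

    def read_proximity_sensors(self):
        # Nine raw readings; constant placeholders, not real sensor data.
        return [0.0] * 9

    def read_wheel_encoders(self):
        # Total ticks since start, one value per wheel.
        return list(self._ticks)

    def set_wheel_drive_rates(self, v_l, v_r):
        # Wheel rates are given in radians per second.
        self._rates = (v_l, v_r)

    def step(self, dt):
        """Advance the fake encoders by dt seconds at the current rates."""
        for i, rate in enumerate(self._rates):
            revolutions = rate * dt / (2.0 * math.pi)
            self._ticks[i] += int(round(revolutions * TICKS_PER_REV))


def ticks_to_distance(ticks):
    """Distance in meters rolled by a wheel for a given tick count.

    Negative counts (backward turns) give negative distances.
    """
    return 2.0 * math.pi * WHEEL_RADIUS * ticks / TICKS_PER_REV


def uni_to_diff(v, omega):
    """Convert forward speed v (m/s) and turn rate omega (rad/s) into
    left and right wheel rates (rad/s) for a differential drive."""
    v_l = (2.0 * v - omega * WHEEL_BASE) / (2.0 * WHEEL_RADIUS)
    v_r = (2.0 * v + omega * WHEEL_BASE) / (2.0 * WHEEL_RADIUS)
    return v_l, v_r


# Usage: drive straight at 0.1 m/s for one second, then read the encoders.
robot = FakeRobotInterface()
robot.set_wheel_drive_rates(*uni_to_diff(0.1, 0.0))
robot.step(1.0)
left_ticks, right_ticks = robot.read_wheel_encoders()
print(ticks_to_distance(left_ticks), ticks_to_distance(right_ticks))
```

With equal wheel rates both encoders advance by the same amount, which matches the straight-line case described earlier. A nonzero `omega` makes the two rates differ, which is what turns the robot.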

Robots, like people, need purpose in life. The goal of programming this robot will be very simple: it will attempt to make its way to a predetermined goal point. The coordinates of the goal are programmed into the control software before the robot is activated.
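As a rough illustration of what "making its way to a goal point" involves, the sketch below computes how far the robot must turn to face the goal and whether it has arrived. It assumes the robot already has an estimate of its own pose `(x, y, theta)`, which this section does not describe how to obtain. The function names `heading_error` and `goal_reached` and the 5 cm tolerance are hypothetical choices, not taken from Sobot Rimulator.

```python
import math


def heading_error(pose, goal):
    """Signed angle (radians) the robot must turn to face the goal.

    pose is an ASSUMED estimate (x, y, theta); goal is (gx, gy).
    The result is wrapped into the range [-pi, pi].
    """
    x, y, theta = pose
    gx, gy = goal
    desired = math.atan2(gy - y, gx - x)
    error = desired - theta
    # Wrap the difference so the robot takes the shorter way around.
    return math.atan2(math.sin(error), math.cos(error))


def goal_reached(pose, goal, tolerance=0.05):
    """True when the robot is within `tolerance` meters of the goal."""
    return math.hypot(goal[0] - pose[0], goal[1] - pose[1]) <= tolerance


# Usage: a robot at the origin facing along +x, with a goal up and to the right.
pose = (0.0, 0.0, 0.0)
goal = (1.0, 1.0)
print(heading_error(pose, goal))   # a positive value means "turn left"
print(goal_reached(pose, goal))
```

A controller could feed this error into the turn rate it commands, while a separate check on the proximity sensors decides when obstacles take priority over the goal.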

However, to complicate matters, the environment of the robot may be strewn with obstacles. The robot MAY NOT collide with an obstacle on its way to the goal. Therefore, if the robot encounters an obstacle, it will have to find its way around so that it can continue on its way to the goal.

We’ve done a lot of work to get to this point, and this robot seems pretty clever. Yet, if you run Sobot Rimulator through several randomized maps, it won’t be long before you find one that this robot can’t deal with. Sometimes it drives itself directly into tight corners and collides. Sometimes it just oscillates back and forth endlessly on the wrong side of an obstacle. Occasionally it is legitimately imprisoned with no possible path to the goal. After all of our testing and tweaking, sometimes we must come to the conclusion that the model we are working with just isn’t up to the job, and we have to change the design or add functionality.

In the robot universe, our little robot’s brain is on the simpler end of the spectrum. Many of the failure cases it encounters could be overcome by adding some more advanced AI to the mix. More advanced robots make use of techniques such as mapping, to remember where they have been and avoid trying the same things over and over; heuristics, to generate acceptable decisions when there is no perfect decision to be found; and machine learning, to better tune the various control parameters governing the robot’s behavior.

Michelle
